The team's activities are rooted first in fundamental research in data processing and statistical learning, which gives it a strong foothold in the Information Science and Technology community. The skills and tools it develops are then applied to process data from the Earth, planetary, and space sciences at a state-of-the-art level.

Main areas of the team's methodological contributions

|

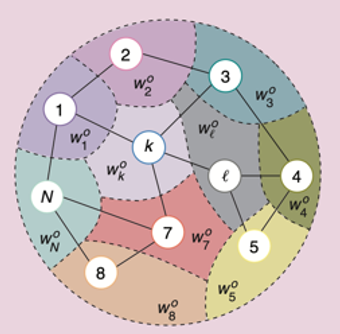

Traitement distribué et en ligne de l’information |

|

Traitement des cubes hyperspectraux |

|

|

Traitement statistique du signal et problèmes inverses |

|

|

Machine Learning |

Main application areas

|

La résolution de problèmes inverses | |

|

Le traitement de données pour la radioastronomie, dans la perspective de SKA |

|

|

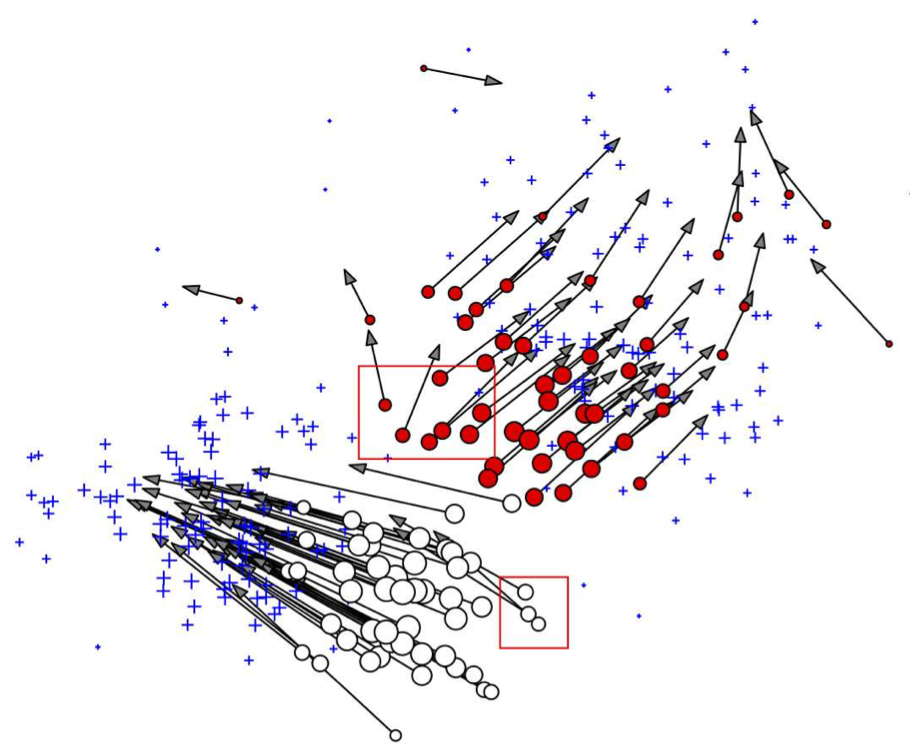

L'application de techniques d'apprentissage statistique à des problèmes de cosmologie |

|

|

L'utilisation de la technologie DAS pour les villes intelligentes |

|

|

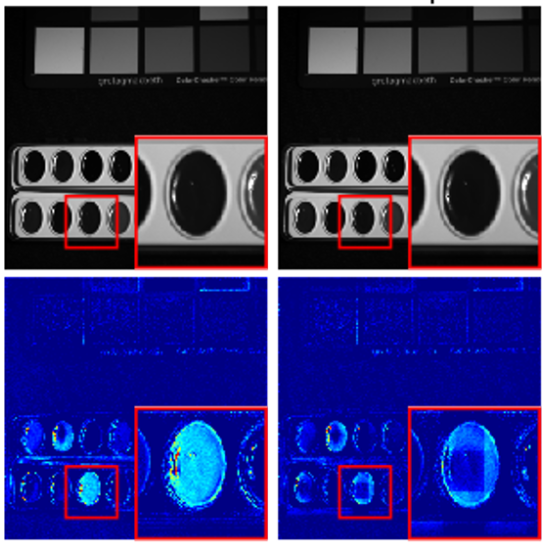

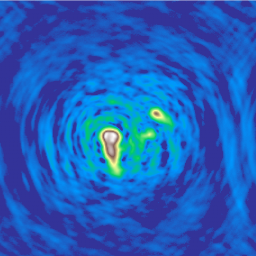

L'analyse de coronographes Solaire et stellaires |

The team collaborates with the 3IA Côte d'Azur institute.

The team's research is carried out in part with support from the French National Research Agency (Agence Nationale de la Recherche, ANR).
